Robots start learning

Ingeniously designed machines learn to move without receiving any instructions from control programs. Similarly, robots are learning about their bodies and their environment.

Text: Stefan Albus

“That’s supposed to be a robot?” Parents who show their science-fiction-loving children an industrial robot for the first time are probably quite familiar with such questions. No wonder: these complex machines are not at all reminiscent of the cinema hero C-3PO from the film Star Wars, or the visions we may have of the domestic robots of the future. What’s more, even the very latest mechanical helpers that neatly place little plastic caps on trays in a factory are basically brainless. If the tray feed snags, such a machine will let all the plastic objects entrusted to it fall without reacting.

For researchers like Nihat Ay and Ralf Der at the Max Planck Institute for Mathematics in the Sciences in Leipzig, there is a simple reason for this: “Robots today are still highly rule-based systems,” says Ralf Der: they simply implement predefined programs. And we attempt to predefine effective responses on their behalf for every eventuality. Ultimately, however, this approach makes robots rigid and inflexible.

Highly developed machines can now perform ballet on their own, climb stairs, and even react to laughter and language – but strictly in accordance with the program. Consequently, they inevitably fail at some point, as the world is too complex to fit into any rulebook: if you want to make a stair-climbing robot falter, all you have to do is put a brick on one of the steps. That is why, when it’s essential to get everything right, for example on space missions, engineers rely on remote-controlled machines. Such difficult missions would require robots that can adapt deftly to their environment and can solve problems autonomously.

This is precisely what is on the minds of researchers like Nihat Ay and Ralf Der. In Ralf Der’s words: “You can train children by telling them exactly what they should do. This is the rule-based approach. However, you can also observe what they are best at and foster this activity.”

But how is a robot that, without its control programs, is little more than a pile of metal, supposed to display behavior that is worth fostering? In the opinion of Der and Ay, this is precisely the misconception that has misguided the advocates of so-called strong artificial intelligence (AI) for years. Instead, in their view, the solution lies in such concepts as self-organization and embodied intelligence.

Biological evolution as a model for the robot school

Researchers have been using self-organizing systems to train robots for certain tasks for years. For around two decades, they have been using the principles of biological evolution to develop robots that are extremely well suited to the execution of simple tasks and whose movement is getting better and better – on the computer, at least. However, controlled evolution in the direction of a simple predefined target has always produced comparatively simple beings: the testing of all possible mutants is too complicated. “Even the most primitive of organisms can still do more than the best robots,” says Der.

The standpoint of the supporters of rule-based AI was further undermined by the development of machines like the passive walker, which was presented by a research group from the Cornell University Human Power and Robotics Lab in the 1990s. The passive walker basically consists of a frame, welded from a few pipes and joints, that can stride down a sloping surface in an astonishingly naturalistic way without the help of any motors and, above all, without any nervous system. When placed on a sloping path, one leg of the frame falls forward and intercepts the fall. This movement triggers the next fall, which causes the other leg to move forward, and so on. “The designers simply used the ‘intelligence’ inherent in the design – its embodied intelligence,” explains Der.

Of course, in the best case, the passive walker comes to a standstill when the slope ends. “But motors could be added to the walker that assume the function of the slope,” says Der. In other words, to give the frame a push at the right time, causing it to fall forward. “There is often an inherent cleverness in things that can be exploited,” says Der. Further progress could be achieved in this area if the embodied intelligence of sophisticated machines were to be combined with modern computer science to establish a kind of embodied artificial intelligence, and the entire thing were then spiced up with a healthy dose of self-organization. This is precisely what Nihat Ay and Ralf Der set out to do in Leipzig.

“The representatives of ‘strong AI’ actually thought for a long time that there exists something akin to an intelligence that is detached from the body,” says Ralf Der. “We now know, however, that such intelligence only rarely exists in a pure form.” Metaphors such as “there must be a way out” show Der that even the search for abstract ideas is linked with ideas from the physical world: “Intelligence developed in conjunction with the body. It is not possible to separate the two.”

On the screen of his notebook computer, Der shows machines whose brains develop on their own and are able to adapt to their own bodies. In the video of a virtual but physically realistically simulated world, a cylinder rolls across a surface, propelled by two internal servomotors that drive two massive spheres back and forth along two axes. The cylinder initially rolls slowly, then ever faster as it makes more effective use of the weights. It brakes suddenly to move in the opposite direction. “We did not predetermine any of this. The cylinder’s brain itself is testing the possibilities its body offers,” explains Der.

Just one neuron per motor

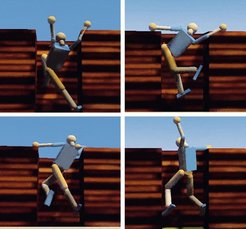

The next video shows a robot in a pit that is approximately the height of a human being. It starts moving like a drunken breakdancer, but with time, the movements become more structured, more coordinated, and more targeted. “The brain of this robot is adapting to both its own body and its environment,” says Der. At some point, the figure actually manages to move in a way that enables it to easily liberate itself from the pit.

All of these models contain essentially the same brain: an arrangement based on two very simple neural networks – a controller that operates the motors and a network the researchers refer to as a self-model. In principle, each of the motors in each of the two networks has only a single neuron assigned to it, yet thanks to Der’s subtle algorithms, this simple configuration generates astoundingly lifelike behavior. But the simulated robots are not particularly clever. They learn everything they know about themselves and their environment from their bodies’ own sensors. In the case of the robot in the pit, for example, the sensors report the angles between the limbs that meet at each of its 16 joints – and nothing more. The neural controller network receives these sensor values and uses them to calculate control signals for the motors.

The self-model is given the following task: “Consider which angles are likely to be reported when the motors execute these signals.” In simplified terms, a superior task – the so-called learning rule – states: “Ensure that the discrepancy between your expectations and the reported values is as small as possible.” If, for example, the robot tries to bend an arm back beyond the elbow, the deviation between target and current states is too large. If this happens several times, the neural network adapts – it learns that one cannot bend the arm beyond an angle of 180 degrees. If the robot repeatedly strikes a wall as the result of a movement, it will eventually internalize this experience.
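The interplay described above can be made concrete with a minimal sketch. It is not Der’s algorithm: the one-joint body `toy_body`, its gain of 0.8, the fixed controller weights, and the learning rate are all assumptions chosen for illustration. One neuron acts as the controller, one as the self-model, and the learning rule nudges the self-model so that its forecast of the next sensor value matches what the sensor then reports.

```python
import math
import random


def controller(x, c, h):
    """Single-neuron controller: turns a sensor value into a motor command."""
    return math.tanh(c * x + h)


def self_model(y, a, b):
    """Single-neuron self-model: forecasts the next sensor value."""
    return a * y + b


def toy_body(y, rng):
    """Hypothetical one-joint body: the joint follows the motor command
    with a fixed gain and a little sensor noise (assumed values)."""
    return 0.8 * y + rng.gauss(0.0, 0.01)


def train_self_model(steps=2000, lr=0.05, seed=0):
    rng = random.Random(seed)
    a, b = 0.0, 0.0      # self-model starts out knowing nothing
    c, h = 2.0, 0.0      # controller weights, kept fixed in this sketch
    x = 0.5              # initial sensor reading
    squared_errors = []
    for _ in range(steps):
        y = controller(x, c, h)           # motor command
        predicted = self_model(y, a, b)   # expected sensor value
        x_next = toy_body(y, rng)         # value the sensor actually reports
        err = x_next - predicted
        # Learning rule: gradient step that shrinks the squared discrepancy.
        a += lr * err * y
        b += lr * err
        squared_errors.append(err * err)
        x = x_next
    return a, b, squared_errors


a, b, squared_errors = train_self_model()
print("early error:", sum(squared_errors[:100]) / 100)
print("late error: ", sum(squared_errors[-100:]) / 100)
```

In this toy setting the late error settles near the sensor noise level, which is the sense in which the self-model has “grown into” its body. The controller is deliberately left untrained here; adapting it as well is where the difficulties discussed next arise.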

However, its brain will forget this again quickly, as 16 neurons can’t remember very much. Nevertheless, the virtual cocoon is able to grow into its body gradually and to obtain a very rudimentary image of itself and its environment – and to behave accordingly.

But this naive version has one problem. At some point, even the most primitive of these brains realizes that it is also fulfilling the learning rules when it does nothing – when it does not move, the discrepancy between the predicted and current status is zero. Der stumbled on the remedy to this depression through his work as a physicist at the University of Leipzig, where he was working on the consequences arising from the rigid direction of the arrow of time.
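Why doing nothing satisfies the naive learning rule can be seen in a few lines. The sketch below assumes a hypothetical body that responds to a motor command with gain 0.8 and a self-model that is slightly wrong (gain 0.7); both numbers are illustrative. Whatever the mismatch, the discrepancy scales with the size of the command, so it is smallest when the command is zero.

```python
def prediction_error(y, true_gain=0.8, model_gain=0.7):
    """Squared gap between the body's response and the self-model's forecast.

    Both gains are assumed values for illustration only.
    """
    measured = true_gain * y      # what the sensor would report
    predicted = model_gain * y    # what the imperfect self-model expects
    return (measured - predicted) ** 2


commands = [-1.0, -0.5, 0.0, 0.5, 1.0]
errors = {y: prediction_error(y) for y in commands}
laziest = min(errors, key=errors.get)   # command with the smallest error
print(errors)
print("command that best satisfies the naive rule:", laziest)
```

A brain that adjusts its controller purely to reduce this error is therefore pulled toward the resting command.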

A reversed arrow of time generates movement

In neural networks, in contrast, the arrow of time can be reversed. Thus, with the self-model, a dynamic can be modeled in reverse time. The controller then learns in a time-reversed world. Of course, it learns that doing nothing is the best solution in that world, too, and it will also try to convert movement to repose. However, “in the real world, this will trigger the exact opposite in temporal terms, so it will generate movement from repose” – like rewinding a film in which a rolling ball comes to a standstill. In this way, the robots remain in constant action – with no further motivation as would be required in a rule-based system.

In order to induce the robot to perceive a time-reversed world, the researchers allow its brain to gather current sensor data. It should now deliver a prognosis as to the values that could have led to this state – so they essentially allow it to look back instead of forward. The network is thus prompted to believe the current measurement values to be future events, and to derive the previous values from them. As a result, the time arrow is reversed. In order to test the present model, the prognosis must merely be compared with the already available sensor values. The model is then adapted to the known learning rules as before.
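The rewound-film picture can be illustrated with a toy example. This is a sketch under simple assumptions, not the researchers’ learning rule: the “world” is a single value that halves at every step (a ball coming to rest), and the function names are hypothetical. The model is trained to look backward, deriving the earlier value from the later one; running the learned backward model as if it were a forward rule then turns near-repose into growing movement.

```python
def record_decay(x0=1.0, damping=0.5, steps=12):
    """Record a 'film' of a value that comes to rest (assumed toy dynamics)."""
    xs = [x0]
    for _ in range(steps):
        xs.append(damping * xs[-1])
    return xs


def fit_backward_model(xs, lr=0.5, epochs=200):
    """Learn w so that x[t] is approximated by w * x[t+1].

    The later reading plays the role of the known value; the earlier
    reading, which is already available, serves as the training target.
    """
    w = 0.0
    for _ in range(epochs):
        for earlier, later in zip(xs[:-1], xs[1:]):
            retrodicted = w * later
            w += lr * (earlier - retrodicted) * later
    return w


def replay_forward(x0, w, steps):
    """Apply the backward model repeatedly, as if time ran forward."""
    xs = [x0]
    for _ in range(steps):
        xs.append(w * xs[-1])
    return xs


film = record_decay()
w = fit_backward_model(film)
rewound = replay_forward(0.001, w, 10)
print("learned backward factor:", w)
print("tiny displacement after 10 steps:", rewound[-1])
```

Because the recorded motion contracts, its time-reversed counterpart expands: a barely perceptible displacement is amplified instead of damped. In the robots, the self-model is what keeps this amplification from running away.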

Again, the self-model prevents robots that have been dispatched into the future in this way from moving in a completely hyperactive way. After all, even if the interaction of the networks and motors were ruled by an overly chaotic zest for action, the sensor reports would frequently no longer suit the prognoses of this neural network. Chaos is very far removed from the ordered structures this model must establish to be able to develop a prognosis in the first place. However, between chaos and repose lies more or less directed motion.

The new concept also ensures that Der’s robots engage in increasingly complex behaviors entirely without prompting: once the simpler movements have been learned and nothing new is added, the situation is equivalent to a neuronal standstill. Thus, with time, the robots extend their option space and try out somersaults, headstands and basic climbing movements – behavioral patterns that could hardly be programmed with such elegance using rule-based processes.

However, no equivalent has yet been found in the animate world for the trick with the reversed arrow of time. Thus, any attempt to make the programming of robots easier in terms of natural models must adopt different approaches. This is where Nihat Ay, who is developing new methods for embodied artificial intelligence with Ralf Der, gets involved. “Nature always wants to optimize energy and information flows,” says Ay. “A bird can catch an insect in flight because it has optimum control over its muscles.” The researchers wanted to derive a learning rule for neural networks that will prompt robots to develop behavior patterns from this principle.

In order to implement this idea, Ay and Der use a mathematical measure – known as predictive information, or PI – that describes how much information from the past can be used to predict the future. A steep PI slope represents a high information flow. This value is usually deduced using tedious algorithms from time series and is thus of no use for robots that must learn quickly.

However, Ay and Der found a way around this: they derive a PI estimated value with the help of the neuronal self-model, which already incorporates a rough idea of the sensor responses to be expected – so it describes the future expected by the robot. Thanks to this numerical value, the artificial brain can actually try to quantify, predict, and optimize the information flow in the sensorimotor loop – that is, from and to the sensors and motors. In other words, the brain constantly attempts to behave as effectively as possible.
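For intuition about predictive information itself, here is a small sketch of the conventional time-series route, not the self-model shortcut described above. It rests on a strong assumption: the sensor signal is treated as a stationary Gaussian process in which only the most recent value matters. In that special case the information shared between one reading and the next is -0.5 * ln(1 - rho^2), where rho is their correlation. The simulated signals and function names are hypothetical.

```python
import math
import random


def simulate_signal(memory, steps, seed=0):
    """Toy sensor signal: each value keeps a fraction `memory` of the last
    one and adds fresh Gaussian noise (an assumed AR(1) process)."""
    rng = random.Random(seed)
    x, xs = 0.0, []
    for _ in range(steps):
        x = memory * x + rng.gauss(0.0, 1.0)
        xs.append(x)
    return xs


def lag1_correlation(xs):
    """Sample correlation between each reading and the one that follows."""
    past, future = xs[:-1], xs[1:]
    mean_p = sum(past) / len(past)
    mean_f = sum(future) / len(future)
    cov = sum((p - mean_p) * (f - mean_f) for p, f in zip(past, future))
    var_p = sum((p - mean_p) ** 2 for p in past)
    var_f = sum((f - mean_f) ** 2 for f in future)
    return cov / math.sqrt(var_p * var_f)


def gaussian_predictive_information(xs):
    """One-step predictive information in nats, under the Gaussian assumption."""
    rho = lag1_correlation(xs)
    return -0.5 * math.log(1.0 - rho * rho)


pi_noise = gaussian_predictive_information(simulate_signal(0.0, 5000))
pi_structured = gaussian_predictive_information(simulate_signal(0.9, 5000))
print("pure noise:       ", pi_noise)
print("structured signal:", pi_structured)
```

Pure noise carries almost no predictive information, because the past says nothing about the future, while the structured signal carries a good deal. Even this simplified estimate needs thousands of samples, which hints at why a faster estimate is attractive for a robot that has to learn on the fly.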

High information flow arouses robot curiosity

The result is promising. Initial analyses actually show that the learning rule “Ensure the highest possible information flow in your sensorimotor loop” prompts similar behavioral patterns in the computer simulations to those achieved by the reversed arrow of time. Because the divergence between the controller status and the self-model’s prognosis is too great, the robots try to move (increasing PI) without becoming hyperactive. One could also say that the robots become curious and try to describe their world as reliably as possible.

In order to see how these brains cope in the real world, they must be given a real body. Ralf Der has also long had a chip in his desk drawer that merely needs to be connected to sensors and motors in order to “learn its way around” them. “Robotics sets out to resolve problems on many levels – from the soldering points to the software architecture,” says Michael Herrmann, a long-time colleague and cooperation partner of Der, who recently moved from the Max Planck Institute for Dynamics and Self-Organization in Göttingen to the University of Edinburgh, where he is carrying out research on prosthetics and biological movement control.

“In other sciences, you can suppress the boundary areas of a four-dimensional reality. In robotics, we have to deal with them,” says the scientist, who commands an outstanding knowledge of the hardware dimension of robotics and is now applying the described learning rules in the field of neuro-prosthetics. Robot designers often have to build their machines with limited resources. “Living nature, however, is also very successful in finding ways to assist components that are not optimal,” says Herrmann. Human beings are not really designed for upright movement, for example.

Despite the unresolved problems that remain, we already encounter robots in everyday life. Vacuum cleaners move autonomously over dirty carpets, for instance, and the first driverless cars are battling their way across desert trails in test runs. We just need to get away from the idea of humanoid machines. Michael Herrmann does, however, see the high energy needs of such machines as problematic: “We will not be able to afford this in the future.”

Robots with personality

Back to Der’s laptop. After several minutes, the walled-in robot has learned a number of movements with which it could easily liberate itself from its predicament, but does not do it. As soon as it has a hand and a foot over the wall, it apparently finds something else in the box that is more interesting, and soon forgets that there is a world outside the pit. It persists in its present. Is this what autonomous, target-oriented behavior looks like?

“It is still too early for that,” says Der. “Right now, we are simply trying to get robots to act autonomously with as little effort as possible.” Ay and Der are thus creating a kind of body feeling for the robots as a start, advancing one of the two dominant schools of thought in the field of AI. The proponents of the other paradigm are trying to train their machines to complete tasks as well as possible by rewarding the neural networks for a job well done. “In contrast, we first want to see what our robots can do, with a view to potentially making use of these capacities later,” explains Ralf Der. This approach is known as task-independent learning.

To date, the link between the two parts was missing: the guidance – a superior instance in the robot brain that would evaluate, store and retrieve information when required. If Der’s virtual figure had guidance, it would actually be able to use its capacities at some point to climb out of its pit – or, much later perhaps, to reach earthquake victims as a real robot carrying medical aid packages.

Self-organization is not enough for this purpose; its productive powers must be exploited by channeling them gently in the desired directions. This objective is being pursued by a number of researchers across the globe; they recently presented a program for its realization at an international workshop in Sydney and agreed on a corresponding cooperation structure (The First International Workshop on Guided Self-Organization, “GSO-2008”). They want to provide the brains of robots with long-term memory that would keep potentially useful behavior in store and intervene in events on a steering basis. Georg Martius at the Max Planck Institute for Dynamics and Self-Organization in Göttingen had the first groundbreaking insights in this regard.

Ralf Der is currently also thinking about so-called echo-state machines. These are, effectively, conglomerates of two large networks, one of which – interpreted by a superior selection layer – is in a position to provide intermediate storage for complex series of movements.

Nihat Ay and Ralf Der believe that robots will become increasingly autonomous, willing to learn, flexible and natural. These characteristics are essential for robots that are expected to climb through tunnels, carry out experiments on planets autonomously, and perhaps even care for elderly and sick people. Autonomous robots will not only solve problems independently one day, they will also have a kind of personality. “It is entirely conceivable that we will be able to recognize individual robots from their gait – we can already see that the history of its neural network determines how the robot moves during the learning phase,” says Ralf Der.

GLOSSARY

Embodied intelligence

The capacity of a structure to optimize its movements on a purely mechanical basis.

Embodied artificial intelligence

A modern research field that combines the “intelligence” of a structure with information technology methods.

Controller

One of the two neural networks on which embodied artificial intelligence is based: receives signals via the sensors and uses them to calculate control signals for the robot.

Self-model

The other neural network: predicts which values the sensors measure when the motors execute a control order.

Learning rule

Minimizes the difference between the values predicted by the self-model and the values actually measured by the sensors.