Musical rhythms in the brain

Researchers find neurological notes that help identify how we process music

Researchers at the Max Planck Institute for Empirical Aesthetics in Frankfurt and at New York University have identified how brain rhythms are used to process music, a finding that also contributes to a better understanding of the auditory system. Furthermore, the study suggests that musical training can enhance the functional role of brain rhythms.

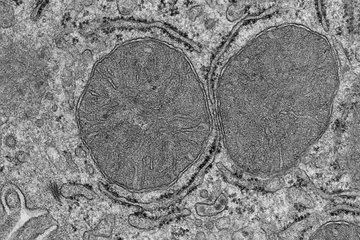

The paper, which appears in the journal Proceedings of the National Academy of Sciences, points to a newfound role the brain’s cortical oscillations play in the detection of musical sequences. The term “cortical oscillations” refers to the rhythmic electrical activity generated spontaneously and in response to stimuli by neural tissue in the central nervous system. The importance of brain oscillations in sensory-cognitive processes has become increasingly evident.

“We’ve isolated the rhythms in the brain that match rhythms in music,” explains Keith Doelling, lead author. “Specifically, our findings show that the presence of these rhythms enhances our perception of music and of pitch changes.”

Not surprisingly, the study found that musicians have more potent oscillatory mechanisms than non-musicians do. “What this shows is we can be trained, in effect, to make more efficient use of our auditory-detection systems,” observes study co-author David Poeppel, director of the Max Planck Institute for Empirical Aesthetics. “Musicians, through their experience, are simply better at this type of processing.”

Previous research has shown that brain rhythms synchronize very precisely with speech, enabling us to parse continuous streams of speech. In other words, they let us isolate syllables, words, and phrases from speech, which, when we hear it, is not marked by spaces or punctuation.

However, it has not been clear what role such cortical brain rhythms, or oscillations, play in processing other types of complex sounds, such as music.

To address this question, the researchers conducted three experiments using magnetoencephalography (MEG), which allows the tiny magnetic fields generated by brain activity to be measured. The study’s subjects were asked to detect short pitch distortions in 13-second clips of classical piano music (by Bach, Beethoven, and Brahms) that varied in tempo, from half a note to eight notes per second. The study’s authors divided the subjects into musicians (those with at least six years of musical training who were currently practicing music) and non-musicians (those with two or fewer years of musical training who were no longer involved in it).

For music faster than one note per second, both musicians and non-musicians showed cortical oscillations that synchronized with the note rate of the clips. The researchers therefore conclude that everyone effectively employed these oscillations to process the sounds they heard, although musicians’ brains synchronized more strongly with the musical rhythms. Only musicians, however, showed oscillations that synchronized with unusually slow clips. This difference, the researchers say, may suggest that non-musicians are less able to process the music as a continuous melody, as opposed to a series of individual notes. Moreover, musicians are able to detect pitch distortions much more accurately, as evidenced by corresponding cortical oscillations.

Thus, brain rhythms appear to play a role in parsing and grouping sound streams into ‘chunks’ that are then analyzed as speech or music, the scientists add.